The current generation of large language models (LLMs) is fundamentally unsuited for deployment in fully autonomous weapons systems. Built to predict and generate text rather than to perceive and act in complex physical environments, these systems lack the embodied intelligence, deterministic control, and safety validation that lethal autonomy requires. Recent tensions between Anthropic and the U.S. Department of Defense, including reports that Anthropic told defense officials its Claude model is not ready for fully autonomous weapon use, indicate that even frontier AI developers recognize these limitations.

LLMs are trained primarily on large-scale text datasets. Their strengths include language reasoning, summarization, planning assistance, and code generation. They lack native sensorimotor grounding and do not directly perceive the physical world. Critically, they operate probabilistically: each output is generated according to statistical likelihood learned from training data, not derived from a validated awareness of physical state.
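The probabilistic behavior described above can be illustrated with a minimal sketch of a single decoding step using temperature sampling, one common decoding strategy. The three-word vocabulary and its scores (logits) are hypothetical; real models choose among tens of thousands of tokens, but the outline of the mechanism (convert scores to probabilities with a softmax, then draw at random according to those probabilities) is the same.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(vocab, logits, temperature, rng):
    """Draw one token at random, weighted by its softmax probability."""
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]

# Hypothetical three-token vocabulary and made-up scores for one step.
vocab = ["stop", "left", "right"]
logits = [2.0, 1.5, 0.5]

rng = random.Random(0)  # fixed seed so the run is reproducible
samples = [sample_next_token(vocab, logits, 1.0, rng) for _ in range(1000)]

# Identical input, 1,000 draws: the output is a mix, not a single answer.
for token in vocab:
    print(token, samples.count(token))
```

Repeating the same step with the same input yields a mix of outputs in roughly the proportions the softmax assigns. Greedy (argmax) decoding or a very low temperature makes the choice repeatable, but repeatability only means the same input gives the same answer; it says nothing about whether that answer is correct for the physical situation.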

In enterprise automation, this probabilistic nature can be managed with oversight layers, human supervision, and constrained task design. In lethal systems, however, the tolerance for uncertainty must be dramatically lower. A fully autonomous weapon requires deterministic performance, real-time sensor fusion, adversarial robustness, and validated fail-safe mechanisms. Current LLM architectures do not meet those requirements.

Text Prediction Is Not Physical Intelligence

There is a structural mismatch between language models and physical autonomy.

Physical AI systems must integrate perception, localization, mapping, motion planning, control theory, and real-time feedback loops between sensors and actuators. They operate in continuous state spaces under noisy, dynamic, and adversarial conditions. LLMs, by contrast, operate over discrete token sequences and are optimized for next-token prediction.
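The structural difference can be made concrete with a minimal sketch of the sense, decide, act cycle that physical control systems run continuously. Everything here is an illustrative assumption: the plant is a hypothetical one-dimensional unit mass, the proportional-derivative (PD) control law stands in for far more elaborate controllers, the 1 ms time step is arbitrary, and the gains `kp=100` and `kd=20` are chosen so that `kd = 2 * sqrt(kp)`, which critically damps a unit mass. Every quantity is a continuous real number updated on a fixed clock, unlike the discrete choice among tokens made at each step of language-model decoding.

```python
import random

def run_control_loop(setpoint=1.0, kp=100.0, kd=20.0, dt=0.001,
                     steps=2000, noise_std=0.01, seed=0):
    """Closed-loop PD control of a hypothetical 1-D unit mass."""
    rng = random.Random(seed)
    position, velocity = 0.0, 0.0  # continuous state of the plant
    for _ in range(steps):
        # 1. Sense: noisy position and velocity readings.
        measured_pos = position + rng.gauss(0.0, noise_std)
        measured_vel = velocity + rng.gauss(0.0, noise_std)
        # 2. Decide: continuous-valued force from a PD control law.
        force = kp * (setpoint - measured_pos) - kd * measured_vel
        # 3. Act: apply the force to the unit mass for one time step
        #    (semi-implicit Euler integration).
        velocity += force * dt
        position += velocity * dt
    return position

final_position = run_control_loop()
print(f"final position after 2 s: {final_position:.4f}")
```

A real system would add state estimation, actuator limits, hard timing guarantees, and fail-safes that this toy omits; the point is only that control acts on continuous state inside a closed feedback loop.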

Anthropic’s reported position that Claude is not suitable for fully autonomous weapon deployment reflects this architectural reality. Neither conversational fluency nor strong performance on reasoning benchmarks translates into reliable real-world actuation.

LLMs may support decision assistance, logistics planning, intelligence synthesis, or operator interfaces. But they are not validated for millisecond-level control of physical systems under battlefield uncertainty. Conflating cognitive assistance with embodied autonomy risks policy overreach.

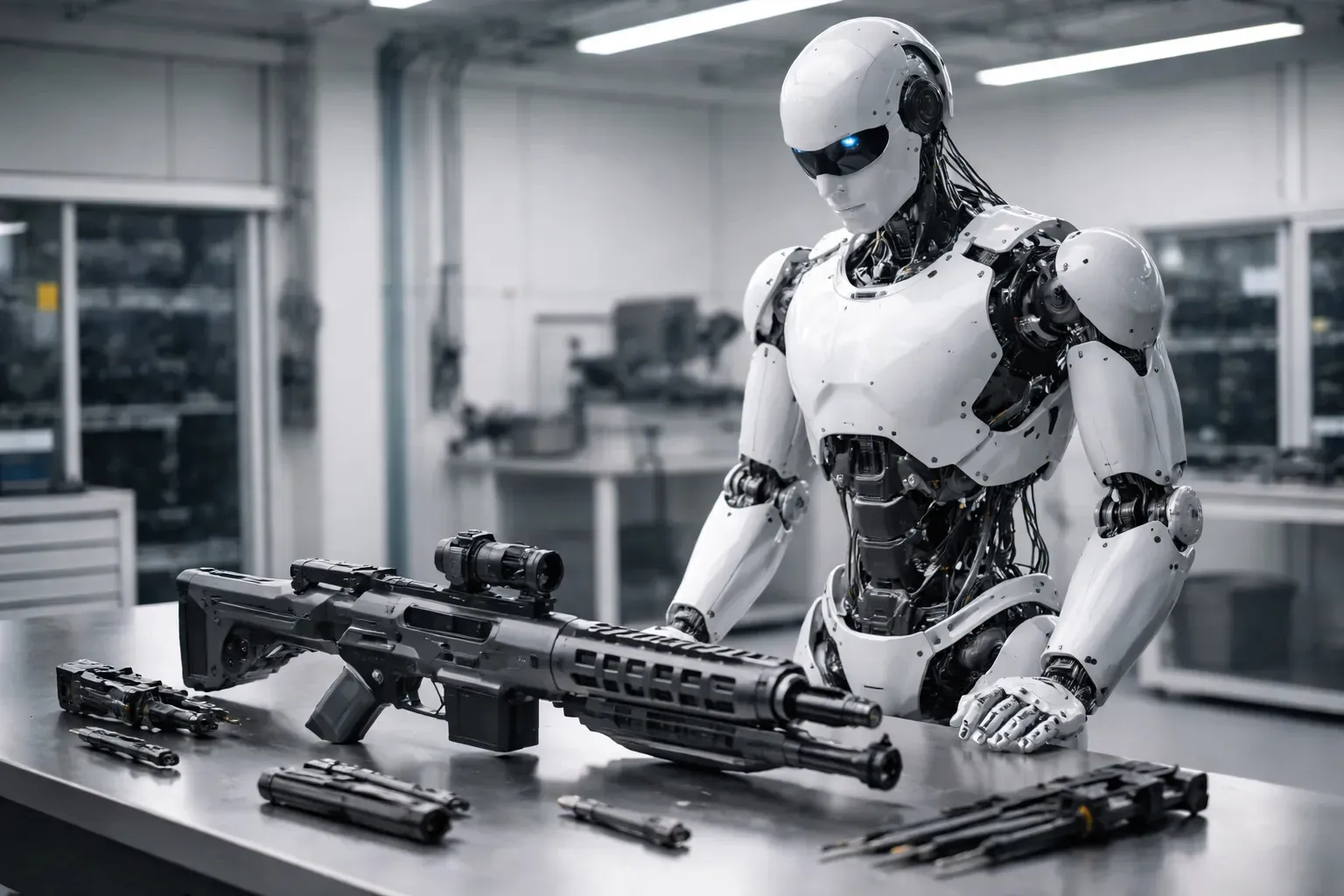

The Physical AI Gap and the Role of Humanoid Robotics

The deeper issue is that the AI industry has not yet solved general-purpose physical intelligence.

Developing embodied AI requires systems that can manipulate objects, maintain balance, adapt to environmental variability, interpret spatial context, and learn from physical interaction. Those capabilities cannot be learned from text alone; they require exposure to the physical world.

Humanoid robotics represents the most scalable pathway to developing this form of physical AI. Unlike fixed industrial robots or narrow automation systems, humanoids are designed to operate in environments built for humans. Warehouses, factories, hospitals, logistics centers, and infrastructure sites are structured around human form factors and mobility constraints.

Training AI through humanoid platforms allows models to learn manipulation, navigation, dexterity, and human-environment interaction within real-world constraints. This embodiment creates grounded world models that extend beyond symbolic reasoning.

If AI is to move safely and reliably into the human physical world, humanoid systems offer the most economically viable bridge. They provide a standardized hardware platform for collecting physical interaction data at scale while operating in enterprise environments that demand safety compliance and measurable productivity.

Importantly, even humanoid robotics remains in an early phase of commercialization. Industrial deployments focus on repetitive labor augmentation, warehouse support, and controlled factory environments. These systems are still undergoing reliability validation, safety certification, and cost optimization.

If general-purpose physical AI is not yet mature enough to replace warehouse shifts at scale, it is further still from meeting the requirements of fully autonomous weapons.

Safety, Validation, and Strategic Risk

Fully autonomous weapons would require validated perception stacks capable of distinguishing combatants from civilians, interpreting intent, operating under electronic interference, and complying with international humanitarian law. No current LLM-based system has demonstrated that level of reliability in physical deployment.

Additionally, LLMs remain vulnerable to adversarial prompts, data poisoning, and contextual manipulation. In enterprise applications, such vulnerabilities may result in workflow errors. In lethal systems, the consequences would be irreversible.

The strategic danger lies in mistaking linguistic competence for embodied intelligence. Benchmark scores and fluent outputs can create a perception of general intelligence that outpaces physical capability.

Defense procurement decisions must be grounded in validated systems engineering, not model demos. Policymakers should distinguish between language AI as a support tool and embodied physical AI as an autonomous actor. These are fundamentally different technical domains.

A More Grounded Path Forward

If the goal is to responsibly advance physical AI, investment should prioritize embodied systems development, safety standards, regulatory frameworks, and enterprise-grade humanoid deployment. Physical AI will mature through controlled industrial environments, data collection at scale, and rigorous testing under real-world constraints.

Humanoid robotics is the bridge between digital intelligence and the human physical world. But that bridge is still under construction.

Until embodied AI systems demonstrate consistent reliability, safety certification, and economic viability in enterprise settings, deploying LLM-driven fully autonomous weapons is technologically premature and strategically destabilizing.

Anthropic’s reported position that Claude is not ready for fully autonomous weapon use reflects a recognition of these realities. The responsible course for policymakers and defense institutions is to acknowledge the physical AI gap, not attempt to bypass it.